Core Libraries

High-performance solutions

Highlights

Easy to Use

User friendly solutions even for complex problems.

High Performance

Faster alternatives to the built-in solutions: an alternative reflection API, enum handling, binary and XML serialization, and fast collections.

Free

The project is open source and is available on GitHub. Being under a custom permissive license the Core Libraries are free to use even in commercial projects.

No Dependencies

No 3rd party libraries are referenced.

Fully Documented

Online documentation is available with many examples.

Proven

Used by developer companies such as Siemens and evosoft for more than a decade.

Download

In Visual Studio

The preferred way is to use the NuGet Package Manager in Visual Studio:

- You can use either the Package Manager Console:

PM> Install-Package KGySoft.CoreLibraries

- Or the Package Manager GUI via the Manage NuGet Packages… context menu item of the project.

Direct Download

Alternatively, you can download the package directly from nuget.org.

Source Code

The source is available on GitHub.

Demo Applications

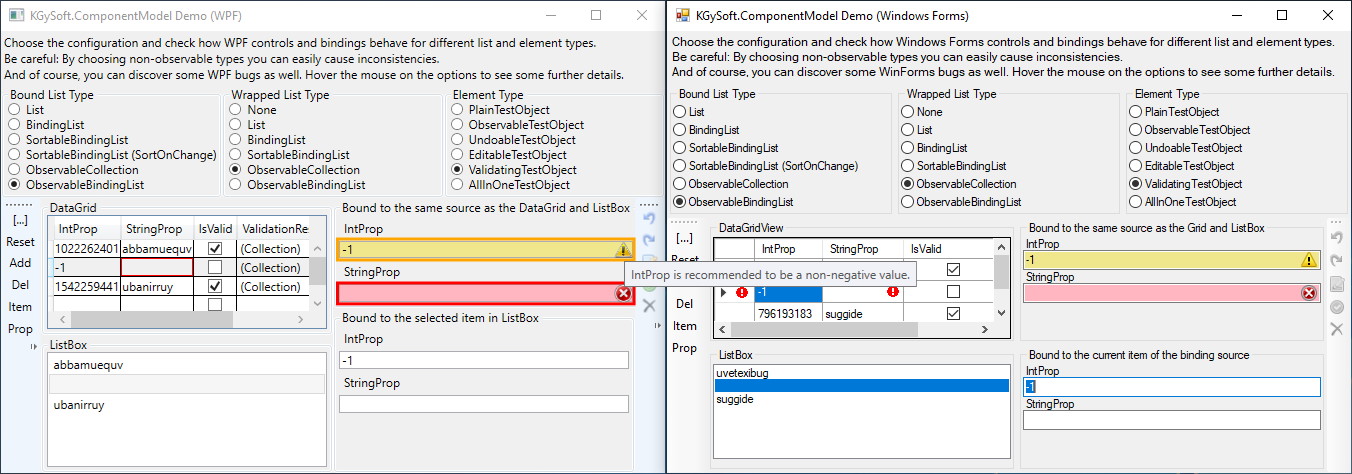

KGySoft.ComponentModel Features Demo

KGySoft.ComponentModelDemo is a desktop application, which focuses mainly on the features of the KGySoft.ComponentModel namespace of KGy SOFT Core Libraries (see also the business objects and command binding examples below). Furthermore, it also provides some useful code samples for using the KGy SOFT Core Libraries in WPF and Windows Forms applications.

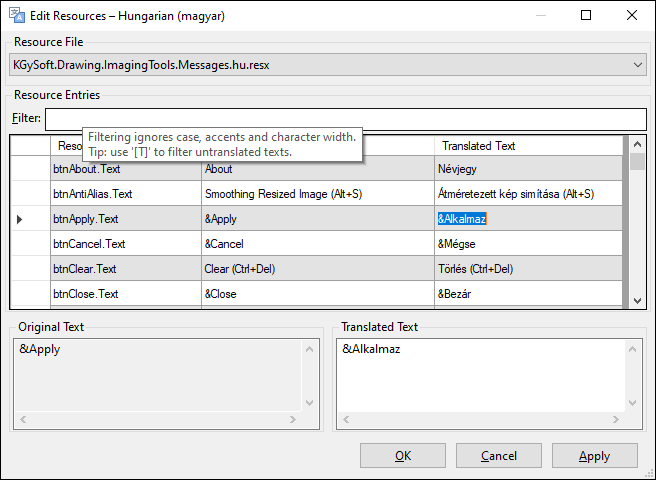

Demonstration of KGySoft.Resources Features

Though KGy SOFT Imaging Tools is not quite a demo, it perfectly demonstrates how to use dynamic resource management in a real application that can generate language resources for non-existing localizations, edit and save the changes in .resx files, and apply them on-the-fly without exiting the application.

💡 Tip: Some simple console application live examples are also available at .NET Fiddle.

Help

Browse the online documentation for examples and detailed descriptions.

Examples

Useful Extensions

In .NET, depending on the targeted platform, you can create a ReadOnlySpan<char>/ReadOnlyMemory<char> from a string or a Span<T>/Memory<T> from an array. In KGy SOFT Core Libraries you can use the StringSegment and ArraySection<T> types in a very similar manner. They are available on older platforms as well (starting with .NET Framework 3.5), and they also provide additional features.

// For strings you can use the AsSegment extensions in a similar way to AsSpan/AsMemory:

StringSegment segment = "This is a string".AsSegment(10); // Contains "string" without allocating a new string.

StringSegment can be cast to ReadOnlySpan<char> (if available on the current platform), but it also has some additional features such as splitting. The StringSegmentExtensions class has several reader methods, which work on the StringSegment type just like StringReader works on strings:

// Splitting a string into segments without allocating new strings:

IList<StringSegment> segments = someDelimitedString.AsSegment().Split('|');

// Or, you can use the reader methods so you don't need to allocate even the list:

// Please note that though StringSegment is immutable, it is passed to the ReadToSeparator extension method

// as a ref parameter so it can "consume" the segment as if it was mutable.

StringSegment rest = someDelimitedString; // note that implicit cast works, too

while (!rest.IsNull)

    DoSomethingWithSegment(rest.ReadToSeparator('|'));

💡 Tip: Try also online.
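The "consume through a ref parameter" idea mentioned in the comments above can be illustrated without the library. The following is only a minimal sketch under stated assumptions: `Segment` and its static `ReadToSeparator` method are hypothetical names invented for this illustration, and they are not the actual StringSegment implementation.

```csharp
using System;

// Hypothetical, simplified stand-in for a string segment; NOT the library's StringSegment.
public readonly struct Segment
{
    private readonly string source;
    private readonly int offset;
    private readonly int length;

    public Segment(string source) : this(source, 0, source.Length) { }

    private Segment(string source, int offset, int length)
    {
        this.source = source;
        this.offset = offset;
        this.length = length;
    }

    // default(Segment) has no underlying string, which marks the end of reading.
    public bool IsNull => source == null;

    // A new string is allocated only when the caller explicitly asks for one.
    public override string ToString() => IsNull ? String.Empty : source.Substring(offset, length);

    // The struct is immutable, but because it is passed by reference,
    // the caller's variable can be replaced by the remaining part.
    public static Segment ReadToSeparator(ref Segment rest, char separator)
    {
        if (rest.IsNull)
            return default;

        int pos = rest.source.IndexOf(separator, rest.offset, rest.length);
        Segment result;
        if (pos < 0)
        {
            // No more separators: return everything and mark the rest as consumed.
            result = rest;
            rest = default;
            return result;
        }

        result = new Segment(rest.source, rest.offset, pos - rest.offset);
        rest = new Segment(rest.source, pos + 1, rest.offset + rest.length - pos - 1);
        return result;
    }
}
```

Only offsets and lengths over the same underlying string change while reading, so no substring is created until `ToString` is called.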

ArraySection<T>, Array2D<T> and Array3D<T> types work similarly but for arrays. They are not just faster than Memory<T> (whose Span property has some extra cost) but offer some additional features as well:

// So far similar to AsSpan or AsMemory extensions, but this is available on all platforms:

ArraySection<byte> section = myByteArray.AsSection(25, 100); // 100 bytes starting at index 25

// But if you wish you can treat it as a 10x10 two-dimensional array:

Array2D<byte> as2d = section.AsArray2D(10, 10);

// 2D indexing works the same way as for a real multidimensional array. But this is actually faster:

byte element = as2d[2, 3];

// Slicing works the same way as for ArraySection/Spans:

Array2D<byte> someRows = as2d[1..^1]; // same as as2d.Slice(1, as2d.Height - 2)

// Or you can get a simple row:

ArraySection<byte> singleRow = as2d[0];

Please note that none of the lines in the example above allocate anything on the heap.

💡 Tip: The ArraySection<T>, Array2D<T> and Array3D<T> types have constructors where you can specify an arbitrary capacity. If the targeted platform supports it, these use array pooling, which can be much faster than allocating new arrays. Do not forget to release the instances that were created by the allocator constructors.

If you want to reinterpret the element type of an array, the CastArray<TFrom, TTo>, CastArray2D<TFrom, TTo> and CastArray3D<TFrom, TTo> types can be used similarly to the ones above. Continuing the previous example:

// You can reinterpret the element type if you wish:

CastArray<byte, Color32> asColors = myByteArray.Cast<byte, Color32>();

// Now you can access the elements cast to the reinterpreted type:

Color32 c = asColors[0];

// Or the reference to them (if supported by the compiler you use):

ref Color32 cRef = ref asColors.GetElementReference(0);

// Casts also have their 2D/3D counterparts:

CastArray2D<byte, Color32> asColors2D = asColors.As2D(height: 4, width: 6);

// Same as above, but directly from the section:

asColors2D = section.Cast2D<byte, Color32>(4, 6);

ℹ️ Note: .NET Core 2.0 also introduced a GetValueOrDefault method for the IReadOnlyDictionary<TKey, TValue> interface. But KGy SOFT’s IDictionary<TKey, TValue>.GetValueOrDefault methods offer more features, such as using a factory delegate if the default value is expensive to evaluate, or using a more specific value type than TValue (with special handling for IDictionary<string, object> types, where you need to specify only one type argument):

// old way:

object obj;

int intValue;

if (dict.TryGetValue("Int", out obj) && obj is int)

intValue = (int)obj;

// C# 7.0 way:

if (dict.TryGetValue("Int", out object o) && o is int i)

intValue = i;

// GetValueOrDefault ways:

intValue = (int)dict.GetValueOrDefault("Int");

intValue = dict.GetValueOrDefault("Int", 0);

intValue = dict.GetValueOrDefault<int>("Int");

💡 Tip: Try also online.
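To make the two extra features mentioned in the note more concrete (a factory delegate for expensive defaults, and a more specific value type for `IDictionary<string, object>`), here is a minimal sketch. The names `GetOrCreateDefault` and `GetActualOrDefault` are hypothetical and were chosen to avoid any confusion with the library's real GetValueOrDefault overloads, whose exact signatures may differ.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helpers for illustration only; not the library's API.
public static class DictionarySketch
{
    // The factory is invoked only when the key is missing,
    // so an expensive default is never evaluated unnecessarily.
    public static TValue GetOrCreateDefault<TKey, TValue>(
        this IDictionary<TKey, TValue> dictionary, TKey key, Func<TValue> defaultValueFactory)
        => dictionary.TryGetValue(key, out TValue value) ? value : defaultValueFactory();

    // For string-keyed object dictionaries only the expected value type has to be specified.
    // The default is returned if the key is missing or the stored value has a different type.
    public static TActual GetActualOrDefault<TActual>(
        this IDictionary<string, object> dictionary, string key, TActual defaultValue = default)
        => dictionary.TryGetValue(key, out object value) && value is TActual actual
            ? actual
            : defaultValue;
}
```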

The AddRange extension method allows you to add multiple elements to any ICollection<T> instance. Similarly, InsertRange, RemoveRange and ReplaceRange are available for IList<T> implementations. You might need to check the ICollection<T>.IsReadOnly property before using these methods.

Depending on the actual implementation inserting/removing/setting elements in an IEnumerable type might be possible. See the Try... methods of the EnumerableExtensions class. All of these methods have a Remarks section in the documentation that precisely describes the conditions when the corresponding method can be used successfully.

💡 Tip: Try also online.
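As a rough illustration of what an AddRange-style extension on `ICollection<T>` involves, including the `IsReadOnly` check mentioned above, consider the following sketch. `AddRangeSketch` is a hypothetical name and this is not the library's implementation.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only; not the library's AddRange.
public static class CollectionSketch
{
    public static void AddRangeSketch<T>(this ICollection<T> target, IEnumerable<T> items)
    {
        if (target == null)
            throw new ArgumentNullException(nameof(target));
        if (items == null)
            throw new ArgumentNullException(nameof(items));

        // Arrays, for example, implement ICollection<T> but report IsReadOnly = true.
        if (target.IsReadOnly)
            throw new NotSupportedException("The target collection is read-only.");

        // List<T> already has an optimized bulk operation.
        if (target is List<T> list)
        {
            list.AddRange(items);
            return;
        }

        foreach (T item in items)
            target.Add(item);
    }
}
```

Because arrays report `IsReadOnly` as true, calling the sketch on an `int[]` fails fast with a clear message instead of throwing from inside `Add`.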

// between convertible types: like the Convert class but supports also enums in both ways

result = "123".Convert<int>(); // culture can be specified, default is InvariantCulture

result = ConsoleColor.Blue.Convert<float>();

result = 13.Convert<ConsoleColor>(); // this would fail by Convert.ChangeType

// TypeConverters are used if possible:

result = "AADC78003DAB4906826EFD8B2D5CF33D".Convert<Guid>();

// New conversions can be registered:

result = 42L.Convert<IntPtr>(); // fail

typeof(long).RegisterConversion(typeof(IntPtr), (obj, type, culture) => new IntPtr((long)obj));

result = 42L.Convert<IntPtr>(); // success

// Registered conversions can be used as intermediate steps:

result = 'x'.Convert<IntPtr>(); // char => long => IntPtr

// Collection conversion is also supported:

result = new List<int> { 1, 0, 0, 1 }.Convert<bool[]>();

result = "Blah".Convert<List<int>>(); // works because string is an IEnumerable<char>

result = new[] { 'h', 'e', 'l', 'l', 'o' }.Convert<string>(); // because string has a char[] constructor

result = new[] { 1.0m, 2, -1 }.Convert<ReadOnlyCollection<string>>(); // via the IList<T> constructor

// even between non-generic collections:

result = new HashSet<int> { 1, 2, 3 }.Convert<ArrayList>();

result = new Hashtable { { 1, "One" }, { "Black", 'x' } }.Convert<Dictionary<ConsoleColor, string>>();

// old way:

if (stringValue == "something" || stringValue == "something else" || stringValue == "maybe some other value" || stringValue == "or...")

DoSomething();

// In method:

if (stringValue.In("something", "something else", "maybe some other value", "or..."))

DoSomething();

📝 Note: Since C# 9.0 the exact example above can also be simplified by the new or pattern keyword. But the In method can be used in other cases as well, such as when the values to compare are not constant expressions. Also, when they are too expensive to evaluate in advance, you can use the In overload that accepts a collection of delegates.

💡 Tip: Try also online.
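The difference between comparing against ready values and comparing against lazily evaluated delegates can be sketched as follows. `IsIn` and `IsInLazy` are hypothetical names used only in this illustration; the library's actual In overloads may be declared differently.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helpers for illustration only; not the library's In method.
public static class InSketch
{
    // All candidates are already evaluated when the method is called.
    public static bool IsIn<T>(this T item, params T[] candidates)
    {
        if (candidates == null)
            return false;

        EqualityComparer<T> comparer = EqualityComparer<T>.Default;
        foreach (T candidate in candidates)
        {
            if (comparer.Equals(item, candidate))
                return true;
        }

        return false;
    }

    // Candidates are evaluated one by one, and evaluation stops at the first match.
    public static bool IsInLazy<T>(this T item, params Func<T>[] candidateFactories)
    {
        if (candidateFactories == null)
            return false;

        EqualityComparer<T> comparer = EqualityComparer<T>.Default;
        foreach (Func<T> factory in candidateFactories)
        {
            if (comparer.Equals(item, factory()))
                return true;
        }

        return false;
    }
}
```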

// Or FastRandom for the fastest results, or SecureRandom for cryptographically safe results.

var rnd = new Random();

// Next... for all simple types:

rnd.NextBoolean();

rnd.NextDouble(Double.PositiveInfinity); // see also the overloads

rnd.NextString(); // see also the overloads

rnd.NextDateTime(); // also NextDate, NextDateTimeOffset, NextTimeSpan

rnd.NextEnum<ConsoleColor>();

// and NextByte, NextSByte, NextInt16, NextDecimal, etc.

// NextObject: for practically anything. See also GenerateObjectSettings.

rnd.NextObject<Person>(); // custom type

rnd.NextObject<(int, string)>(); // tuple

rnd.NextObject<IConvertible>(); // interface implementation

rnd.NextObject<MarshalByRefObject>(); // abstract type implementation

rnd.NextObject<int[]>(); // array

rnd.NextObject<IList<IConvertible>>(); // some collection of an interface

rnd.NextObject<Func<DateTime>>(); // delegate with random result

// specific type for object (useful for non-generic collections)

rnd.NextObject<ArrayList>(new GenerateObjectSettings { SubstitutionForObjectType = typeof(ConsoleColor) });

// literally any random object

rnd.NextObject<object>(new GenerateObjectSettings { AllowDerivedTypesForNonSealedClasses = true });

💡 Tip: Find more extensions in the online documentation.

High Performance Collections:

Cache<TKey, TValue>: A Dictionary-like type with a specified capacity. If the cache is full and new items have to be stored, then the oldest element (or the least recently used one, depending on Behavior) is dropped from the cache.

If an item loader is passed to the constructor, then it is enough only to read the cache via the indexer and the corresponding item will be transparently loaded when necessary.

💡 Tip: To obtain a thread-safe cache accessor it is recommended to use the ThreadSafeCacheFactory class, where you can configure the characteristics of the cache to create. You can create completely lock-free caches, or caches with strict capacity management, expiring values, etc. See the Remarks section of the ThreadSafeCacheFactory.Create method for details.

// instantiating the cache by a loader method and a capacity of 1000 possible items

var personCache = new Cache<int, Person>(LoadPersonById, 1000);

// you only need to read the cache:

var person = personCache[id];

// If a cache instance is accessed from multiple threads use it from a thread safe accessor.

// The item loader can be protected from being called concurrently.

// Similarly to ConcurrentDictionary, this is false by default.

var threadSafeCache = personCache.GetThreadSafeAccessor(protectItemLoader: false);

person = threadSafeCache[id];

💡 Tip: Try also online.
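The behavior described above (a fixed capacity, dropping the oldest element, and loading items transparently through the indexer) can be sketched in a few lines. `TinyCache` is a hypothetical, deliberately naive class that is not thread safe and is not how the library's cache is implemented; it only illustrates the "drop the oldest element" strategy.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, simplified cache for illustration only; not the library's implementation.
public sealed class TinyCache<TKey, TValue>
{
    private readonly Func<TKey, TValue> itemLoader;
    private readonly int capacity;
    private readonly Dictionary<TKey, TValue> items = new Dictionary<TKey, TValue>();
    private readonly Queue<TKey> insertionOrder = new Queue<TKey>();

    public TinyCache(Func<TKey, TValue> itemLoader, int capacity)
    {
        if (capacity < 1)
            throw new ArgumentOutOfRangeException(nameof(capacity));
        this.itemLoader = itemLoader ?? throw new ArgumentNullException(nameof(itemLoader));
        this.capacity = capacity;
    }

    public int Count => items.Count;

    // Reading is enough: a missing item is loaded and stored transparently.
    public TValue this[TKey key]
    {
        get
        {
            if (items.TryGetValue(key, out TValue value))
                return value;

            value = itemLoader(key);

            // When the cache is full, the element that was stored first is dropped.
            if (items.Count == capacity)
                items.Remove(insertionOrder.Dequeue());

            items.Add(key, value);
            insertionOrder.Enqueue(key);
            return value;
        }
    }
}
```

A least-recently-used strategy would additionally have to move an item to the end of the order on every successful read, which this sketch omits.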

ThreadSafeDictionary<TKey, TValue>: Similar to ConcurrentDictionary but has somewhat different characteristics and can be used even in .NET Framework 3.5, where ConcurrentDictionary is not available. It can be a good alternative when a fixed number of keys have to be stored or when the Count property has to be accessed frequently, which is particularly slow in ConcurrentDictionary. See the Remarks section of the ThreadSafeDictionary<TKey, TValue> class for details, including a speed comparison of different members.

ThreadSafeHashSet<T>: There is still no ConcurrentHashSet<T> type in .NET. One option is to use a ConcurrentDictionary<TKey, TValue> with ignored values. Another option is to use the ThreadSafeHashSet<T> class, which uses a very similar approach to ThreadSafeDictionary<TKey, TValue>: when used with a limited number of items, or when new items are rarely added compared to contains checks, it may become practically lock-free.

StringKeyedDictionary<TValue>: Acts as a regular IDictionary<string, TValue> but, as an IStringKeyedDictionary<TValue> implementation, it also supports accessing its values by StringSegment or ReadOnlySpan<char> keys. To use custom string comparison you can pass a StringSegmentComparer instance to the constructors, which allows comparisons by string, StringSegment and ReadOnlySpan<char> instances.

CircularList<T>: Fully compatible with List<T> but maintains a dynamic start/end position of the stored elements internally, which makes it very fast when elements are added or removed at the first position. It also has optimized range operations and can return both value type and reference type enumerators depending on the context.

var clist = new CircularList<int>(Enumerable.Range(0, 1000));

// or by ToCircularList:

clist = Enumerable.Range(0, 1000).ToCircularList();

// AddFirst/AddLast/RemoveFirst/RemoveLast

clist.AddFirst(-1); // same as clist.Insert(0, -1); (much faster than List<T>)

clist.RemoveFirst(); // same as clist.RemoveAt(0); (much faster than List<T>)

// if the inserted collection is not ICollection<T>, then List<T> is especially slow here

// because it inserts the items one by one and shifts the elements in every iteration

clist.InsertRange(0, Enumerable.Range(-500, 500));

// When enumerated by LINQ expressions, List<T> is not so effective because of its boxed

// value type enumerator. In these cases CircularList returns a reference type enumerator.

Console.WriteLine(clist.SkipWhile(i => i < 0).Count());
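Why a moving start position makes insertion at the front cheap can be shown with a small ring buffer. `RingSketch` is a hypothetical class written for this illustration; it supports only a handful of operations and says nothing about the internals of the real CircularList<T>.

```csharp
using System;

// Hypothetical, minimal ring buffer for illustration only; not the library's CircularList<T>.
public sealed class RingSketch<T>
{
    private T[] buffer = new T[4];
    private int start; // physical index of the first logical element
    private int count;

    public int Count => count;

    public T this[int index]
    {
        get
        {
            if ((uint)index >= (uint)count)
                throw new ArgumentOutOfRangeException(nameof(index));
            return buffer[(start + index) % buffer.Length];
        }
    }

    // No elements are shifted: only the start position moves backwards (with wrap-around).
    public void AddFirst(T item)
    {
        EnsureCapacity();
        start = (start - 1 + buffer.Length) % buffer.Length;
        buffer[start] = item;
        count++;
    }

    public void AddLast(T item)
    {
        EnsureCapacity();
        buffer[(start + count) % buffer.Length] = item;
        count++;
    }

    public T RemoveFirst()
    {
        if (count == 0)
            throw new InvalidOperationException("The collection is empty.");
        T item = buffer[start];
        buffer[start] = default(T);
        start = (start + 1) % buffer.Length;
        count--;
        return item;
    }

    // Doubles the storage when full and copies the elements in logical order.
    private void EnsureCapacity()
    {
        if (count < buffer.Length)
            return;
        var bigger = new T[buffer.Length * 2];
        for (int i = 0; i < count; i++)
            bigger[i] = buffer[(start + i) % buffer.Length];
        buffer = bigger;
        start = 0;
    }
}
```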

ObservableBindingList<T>: Combines the features of IBindingList implementations (such as BindingList<T>) and INotifyCollectionChanged implementations (such as ObservableCollection<T>). This makes it an ideal collection type in many cases (such as in a technology-agnostic View-Model layer) because it can be used in practically any UI environment. By default it is initialized by a SortableBindingList<T> but it can wrap any IList<T> implementation.

💡 Tip: See more collections in the KGySoft.Collections, KGySoft.Collections.ObjectModel and KGySoft.ComponentModel namespaces.

Fast Enum Handling

In .NET Framework some enum operations used to be legendarily slow. Back then I created the static Enum<TEnum> and EnumComparer<TEnum> classes, which provide much faster enum operations than the System.Enum type. Since then, the performance has been radically improved, especially in .NET Core, so the difference has become much narrower, though it still exists.

So today the main benefit of using the Enum<TEnum> class is its extra features and maybe the support of ReadOnlySpan<char> type, which is still missing at System.Enum. And of course, if you target older frameworks, which can’t use ReadOnlySpan<char>, you can still use the member overloads that accept StringSegment parameters.

💡 Tip: See the performance comparison in .NET Core and try it online.
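One general technique for speeding up repeated enum operations is to collect the reflection-based information once per enum type and serve later calls from a cache. The sketch below shows this idea with a hypothetical `CachedEnum<TEnum>` class; it is an assumption-laden illustration and does not claim to reflect how Enum<TEnum> works internally.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only; not the library's Enum<TEnum> class.
public static class CachedEnum<TEnum> where TEnum : struct, Enum
{
    // Built once per closed generic type, on first use.
    private static readonly Dictionary<TEnum, string> namesByValue = CreateNames();

    private static Dictionary<TEnum, string> CreateNames()
    {
        var result = new Dictionary<TEnum, string>();
        foreach (TEnum value in Enum.GetValues(typeof(TEnum)))
        {
            // The indexer is used instead of Add because enums may define
            // several names for the same value.
            result[value] = Enum.GetName(typeof(TEnum), value);
        }

        return result;
    }

    // Defined values are served from the cache; anything else falls back to ToString.
    public static string GetName(TEnum value)
        => namesByValue.TryGetValue(value, out string name) ? name : value.ToString();
}
```

Note that on older frameworks the default dictionary comparer may box enum keys, which is the kind of cost a dedicated enum comparer is meant to avoid.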

Alternative Reflection API

There are four public classes derived from MemberAccessor, which can be used where you would use MemberInfo instances. All of them support generic access in some specialized cases for even better performance. But even the non-generic access, which can be used in all cases, is at least one order of magnitude faster than system reflection. The following table summarizes the relation between the system reflection types and their KGy SOFT counterpart:

| System Type | KGy SOFT Type |
|---|---|
| FieldInfo | FieldAccessor |
| PropertyInfo | PropertyAccessor |
| MethodInfo | MethodAccessor |
| ConstructorInfo, Activator | CreateInstanceAccessor |

💡 Tip: See the links in the table above for performance comparison examples.
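For background, a common way to make repeated reflection calls faster is to compile a delegate for a member once and reuse it afterwards. The sketch below does this for instance property getters using expression trees. `GetterCache` is a hypothetical helper; the source does not describe how the accessor classes are implemented, so this only illustrates the general technique.

```csharp
using System;
using System.Collections.Concurrent;
using System.Linq.Expressions;
using System.Reflection;

// Hypothetical helper for illustration only; not the library's PropertyAccessor.
public static class GetterCache
{
    private static readonly ConcurrentDictionary<PropertyInfo, Func<object, object>> getters
        = new ConcurrentDictionary<PropertyInfo, Func<object, object>>();

    // The first call for a property compiles a delegate; later calls reuse it.
    public static object GetValue(object instance, PropertyInfo property)
        => getters.GetOrAdd(property, p => CreateGetter(p))(instance);

    // Builds the equivalent of: instance => (object)((DeclaringType)instance).Property
    // Assumes a readable instance (non-static) property without index parameters.
    private static Func<object, object> CreateGetter(PropertyInfo property)
    {
        ParameterExpression instance = Expression.Parameter(typeof(object), "instance");
        Expression typedInstance = Expression.Convert(instance, property.DeclaringType);
        Expression propertyAccess = Expression.Property(typedInstance, property);
        Expression boxedResult = Expression.Convert(propertyAccess, typeof(object));
        return Expression.Lambda<Func<object, object>>(boxedResult, instance).Compile();
    }
}
```

Compiling has a one-time cost, so the approach pays off only when the same member is accessed many times.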

If convenience is a priority, then the Reflector class offers every functionality you need for reflection. While the accessors above can be obtained by a MemberInfo instance, the Reflector can be used even by name. The following example demonstrates this for methods:

// Any method by MethodInfo:

MethodInfo method = typeof(MyType).GetMethod("MyMethod");

result = Reflector.InvokeMethod(instance, method, param1, param2); // by Reflector

result = method.Invoke(instance, new object[] { param1, param2 }); // the old (slow) way

result = MethodAccessor.GetAccessor(method).Invoke(instance, param1, param2); // by accessor (fast)

// Instance method by name (can be non-public, even in base classes):

result = Reflector.InvokeMethod(instance, "MethodName", param1, param2);

// Static method by name (can be non-public, even in base classes):

result = Reflector.InvokeMethod(typeof(MyType), "MethodName", param1, param2);

// Even generic methods are supported:

result = Reflector.InvokeMethod(instance, "MethodName", new[] { typeof(GenericArg) }, param1, param2);

// If you are not sure whether a method by the specified name exists use TryInvokeMethod:

bool invoked = Reflector.TryInvokeMethod(instance, "MethodMaybeExists", out result, param1, param2);

📝 Note: The Try... methods return false if a matching member with the given name/parameters cannot be found. However, if a member was found and invoked but it threw an exception, then that exception is propagated to the caller.

Serialization

🔒 Security Note: If the serialization stream may come from an untrusted source (e.g. remote service, file or database), then make sure you enable the SafeMode option for the deserialization. By doing so, all custom types that are stored by assembly identity or by full name must be explicitly declared as expected types (this is not needed for natively supported types, which are not stored by name). Without using this option (or some additional security for the serialization stream) binary serialization is safe only if both the serialization and deserialization happen in the same process, such as when creating in-memory snapshots of objects (e.g. for undo/redo functionality) or bitwise deep clones. See the security notes in the Remarks section of the BinarySerializationFormatter class for more details.

BinarySerializationFormatter serves the same purpose as BinaryFormatter but it fixes a lot of the security concerns BinaryFormatter suffered from and in most cases produces much more compact serialized data with better performance. It supports many core types natively, including many collections and newer basic types that are not marked serializable anymore (e.g. Half, Rune, DateOnly, TimeOnly, etc.). Native support means that serialization of those types does not involve storing assembly and type names at all, which ensures very compact sizes as well as their safe deserialization on every possible platform. Apart from the natively supported types it works similarly to BinaryFormatter: it uses recursive serialization of fields and supports the full binary serialization infrastructure including ISerializable, IDeserializationCallback, IObjectReference, serialization method attributes, and binder and surrogate support. Please note, though, that in safe mode no custom binders or surrogates are allowed.

Even if used in a secure environment or on a cryptographically secured channel, binary serialization of custom types is not really recommended when communicating between remote entities, because by default custom serialization relies on private implementation details (i.e. field names). In such cases use messages created exclusively from the natively supported types (see them at BinarySerializationFormatter), so the formatter can be used somewhat like ProtoBuf but with many more predefined types available. If you really need to use custom types between remote endpoints, then it is recommended to use message types that can be completely restored by public fields and properties so you can use a text-based serializer, e.g. an XML serializer.

Binary serialization functions are available via the static BinarySerializer class and by the BinarySerializationFormatter type.

💡 Tip: Try also online.

// Simple way: by the static BinarySerializer class

byte[] rawData = BinarySerializer.Serialize(instance); // to byte[]

BinarySerializer.SerializeToStream(stream, instance); // to Stream

BinarySerializer.SerializeByWriter(writer, instance); // by BinaryWriter

// or explicitly by a BinarySerializationFormatter instance:

rawData = new BinarySerializationFormatter().Serialize(instance);

// Deserialization:

obj = BinarySerializer.Deserialize<MyClass>(rawData); // from byte[]

obj = BinarySerializer.DeserializeFromStream<MyClass>(stream); // from Stream

obj = BinarySerializer.DeserializeByReader<MyClass>(reader); // by BinaryReader

The BinarySerializationFormatter supports many types and collections natively (see the link), which has two benefits: these types are serialized without any assembly information and the result is very compact as well. Additionally, you can use the BinarySerializationOptions.OmitAssemblyQualifiedNames flag to omit assembly information on serialization, which reduces the size of the output even more and, more importantly, makes it impossible to load assemblies during deserialization even if BinarySerializationOptions.SafeMode is not used.

🔒 Security Note: KGy SOFT’s XmlSerializer is a polymorphic serializer. If the serialized content comes from an untrusted source, make sure you use its DeserializeSafe/DeserializeContentSafe methods, which disallow loading assemblies during the deserialization even if types are specified with their assembly qualified names, and make it necessary to name every custom type that can be expected in the serialization XML. See the security notes in the Remarks section of the XmlSerializer class for more details.

Unlike binary serialization, which is meant to save the bitwise content of an object, the XmlSerializer can save and restore the public properties and fields. Meaning, it cannot guarantee that the original state of an object can be fully restored unless it is completely exposed by public members. The XmlSerializer can be a good choice for saving configurations or components whose state can be edited in a property grid, for example.

Therefore XmlSerializer supports several System.ComponentModel attributes and techniques such as TypeConverterAttribute, DefaultValueAttribute, DesignerSerializationVisibilityAttribute and even the ShouldSerialize... methods.

And the serialization:

💡 Tip: Try also online.

var person = ThreadSafeRandom.Instance.NextObject<Person>();

var options = XmlSerializationOptions.RecursiveSerializationAsFallback;

// serializing into XElement

XElement element = XmlSerializer.Serialize(person, options);

var clone = XmlSerializer.DeserializeSafe<Person>(element);

// serializing into file/Stream/TextWriter/XmlWriter are also supported: An XmlWriter will be used

var sb = new StringBuilder();

XmlSerializer.Serialize(new StringWriter(sb), person, options);

clone = XmlSerializer.DeserializeSafe<Person>(new StringReader(sb.ToString()));

Console.WriteLine(sb);

If a type has no default constructor, it can still be deserialized after manually creating an empty instance:

public class MyComponent

{

// there is no default constructor

public MyComponent(Guid id) => Id = id;

// read-only property: will not be serialized unless forced by the

// ForcedSerializationOfReadOnlyMembersAndCollections option

public Guid Id { get; }

// this tells the serializer to allow recursive serialization for this non-common type

// without using the RecursiveSerializationAsFallback option

[DesignerSerializationVisibility(DesignerSerializationVisibility.Content)]

public Person Person { get; set; }

}

When serializing such a type we need to emit a root element explicitly and on deserialization we need to create an empty MyComponent instance manually:

var instance = new MyComponent(Guid.NewGuid()) { Person = person };

// serialization (now into XElement but XmlWriter is also supported):

var root = new XElement("SomeRootElement");

XmlSerializer.SerializeContent(root, instance);

// deserialization (now from XElement but XmlReader is also supported):

var cloneWithNewId = new MyComponent(Guid.NewGuid());

XmlSerializer.DeserializeContent(root, cloneWithNewId);

Dynamic Resource Management

💡 Tip: For a real-life example see also the KGy SOFT Imaging Tools application that supports creating and applying new localizations on-the-fly, from within the application.

The KGy SOFT Core Libraries contain numerous classes for working with resources directly from .resx files. Some of these classes may be familiar from the .NET Framework. For example, ResXResourceReader, ResXResourceWriter and ResXResourceSet are reimplemented by referencing only the core system assemblies (the original versions of these reside in System.Windows.Forms.dll, which cannot be used in all circumstances), and they received a number of improvements at the same time. For example, ResXResourceSet is now a read-write collection and the changes can be saved in a new .resx file (see the links above for details, comparisons and examples).

On top of those, KGy SOFT Core Libraries introduce several new types that can be used the same way as a standard ResourceManager class:

- ResXResourceManager works the same way as the regular ResourceManager but works on .resx files instead of compiled resources and supports adding and saving new resources, .resx metadata and assembly aliases.

- The HybridResourceManager is able to work both with compiled and .resx resources even at the same time: it can be used to override the compiled resources with .resx content.

- The DynamicResourceManager can be used to generate new .resx files automatically for languages without a localization. The KGy SOFT Libraries also use DynamicResourceManager instances to maintain their resources. The library assemblies are compiled only with the English resources but any consumer library or application can enable the .resx expansion for any language.

💡 Tip: See the Remarks section of the KGySoft.Resources namespace description, which may help you to choose the most appropriate class for your needs.

// Just pick a language for your application

LanguageSettings.DisplayLanguage = CultureInfo.GetCultureInfo("de-DE");

// Opt-in using .resx files (for all `DynamicResourceManager` instances, which are configured to obtain

// their configuration from LanguageSettings):

LanguageSettings.DynamicResourceManagersSource = ResourceManagerSources.CompiledAndResX;

// When you access a resource for the first time for a new language, a new resource set will be generated.

// This is saved automatically when you exit the application

Console.WriteLine(PublicResources.ArgumentNull);

The example above will print a prefixed English message for the first time: [T]Value cannot be null.. Find the newly saved .resx file and look for the untranslated resources with the [T] prefix. After saving an edited resource file the example will print the localized message.

See a complete example at the LanguageSettings class.

See the step-by-step description at the DynamicResourceManager class.

Business Objects

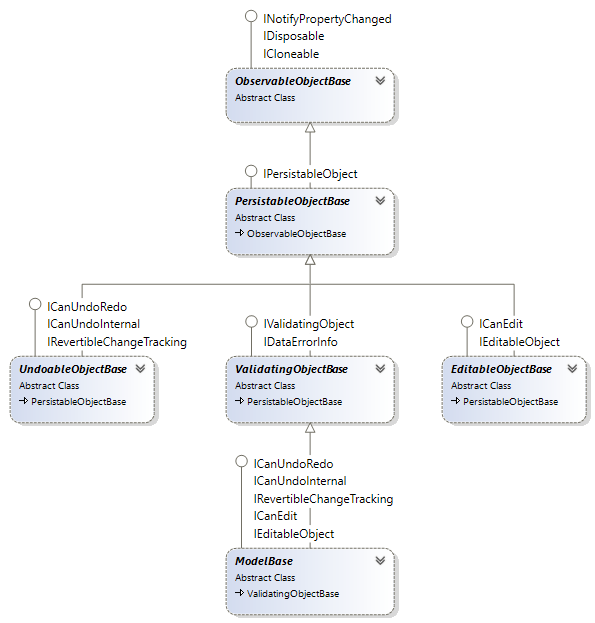

The KGySoft.ComponentModel namespace contains several types that can be used as base type for model classes, view-model objects or other kind of business objects:

- ObservableObjectBase: The simplest class, supports change notification via the INotifyPropertyChanged interface and can tell whether any of the properties have been modified. Provides protected members for maintaining properties.

- PersistableObjectBase: Extends the ObservableObjectBase class by implementing the IPersistableObject interface, which makes it possible to access and manipulate the internal property storage.

- UndoableObjectBase: Adds step-by-step undo/redo functionality to the PersistableObjectBase type. This is achieved by implementing a flexible ICanUndoRedo interface. Also implements the standard System.ComponentModel.IRevertibleChangeTracking interface.

- EditableObjectBase: Adds committable and revertible editing functionality to the PersistableObjectBase type. The editing sessions can be nested. This is achieved by implementing a flexible ICanEdit interface. Also implements the standard System.ComponentModel.IEditableObject interface, which is already supported by many existing controls in the various graphical user environments.

- ValidatingObjectBase: Adds business validation features to the PersistableObjectBase type. This is achieved by implementing a flexible IValidatingObject interface, which provides multiple validation levels for each property. Also implements the standard System.ComponentModel.IDataErrorInfo interface, which is the oldest and thus the most widely supported standard validation technique in the various GUI frameworks.

- ModelBase: Unifies the features of all of the classes above.

The following example demonstrates a possible model class with validation:

public class MyModel : ValidatingObjectBase

{

// A simple integer property (with zero default value).

// Until the property is set no value is stored internally.

public int IntProperty { get => Get<int>(); set => Set(value); }

// An int property with default value. Until the property is set the default will be returned.

public int IntPropertyCustomDefault { get => Get(-1); set => Set(value); }

// If the default value is a complex one, which should not be evaluated each time

// you can provide a factory for it.

// When this property is read for the first time without setting it before

// the provided delegate will be invoked and the returned default value is stored without triggering

// the PropertyChanged event.

public MyComplexType ComplexProperty { get => Get(() => new MyComplexType()); set => Set(value); }

// You can use regular properties to prevent raising the events

// and not to store the value in the internal storage.

// The OnPropertyChanged method still can be called explicitly to raise the PropertyChanged event.

public int UntrackedProperty { get; set; }

public int Id { get => Get<int>(); set => Set(value); }

public string Name { get => Get<string>(); set => Set(value); }

protected override ValidationResultsCollection DoValidation()

{

var result = new ValidationResultsCollection();

// info

if (Id == 0)

result.AddInfo(nameof(Id), "This will be considered as a new object when saved");

// warning

if (Id < 0)

result.AddWarning(nameof(Id), $"{nameof(Id)} is recommended to be greater or equal to 0.");

// error

if (String.IsNullOrEmpty(Name))

result.AddError(nameof(Name), $"{nameof(Name)} must not be null or empty.");

return result;

}

}

💡 Tip: See the KGySoft.ComponentModelDemo repository to try business objects in action.
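The `Get`/`Set` pattern used by the model class above (values kept in an internal storage keyed by property name, with change notification on `Set`) can be approximated with plain .NET types. The sketch below is a simplified illustration under stated assumptions: `ObservableSketch` and `PersonModel` are hypothetical classes, and the real base classes offer far more (modification tracking, default value factories, validation, and so on).

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel;
using System.Runtime.CompilerServices;

// Hypothetical base class for illustration only; not the library's ObservableObjectBase.
public abstract class ObservableSketch : INotifyPropertyChanged
{
    private readonly Dictionary<string, object> storage = new Dictionary<string, object>();

    public event PropertyChangedEventHandler PropertyChanged;

    // Until a property is set, nothing is stored and the default value is returned.
    protected T Get<T>(T defaultValue = default(T), [CallerMemberName] string propertyName = null)
        => storage.TryGetValue(propertyName, out object value) ? (T)value : defaultValue;

    // Stores the value and raises PropertyChanged only if the stored value actually changes.
    protected bool Set(object value, [CallerMemberName] string propertyName = null)
    {
        if (storage.TryGetValue(propertyName, out object oldValue) && Equals(oldValue, value))
            return false;

        storage[propertyName] = value;
        PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(propertyName));
        return true;
    }
}

// Hypothetical model demonstrating the pattern.
public class PersonModel : ObservableSketch
{
    public string Name { get => Get<string>(); set => Set(value); }

    public int Age { get => Get(-1); set => Set(value); }
}
```

The `[CallerMemberName]` attribute lets the compiler supply the property name, so the accessors do not have to repeat it as a string.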

Command Binding

KGy SOFT Core Libraries contain a simple, technology-agnostic implementation of the Command pattern. Commands are actually advanced event handlers. The main benefit of using commands is that they can be bound to multiple sources and targets, and unsubscription from sources is handled automatically when the binding is disposed (no more memory leaks due to delegates and you don’t even need to use heavy-weight weak events).

A command is represented by the ICommand interface (its documentation also contains some examples). There are four pairs of predefined ICommand implementations that can accept delegate handlers. The following example uses the TargetedCommand pair, without and with a parameter:

public static class MyCommands

{

public static readonly ICommand PasteCommand = new TargetedCommand<TextBoxBase>(tb => tb.Paste());

public static readonly ICommand ReplaceTextCommand = new TargetedCommand<Control, string>((target, value) => target.Text = value);

}

To use a command it has to be bound to one or more sources (and to some targets if the command is targeted). To create a binding the CreateBinding extension method can be used:

var binding = MyCommands.PasteCommand.CreateBinding(menuItemPaste, "Click", textBox);

// Alternative way using fluent syntax (also allows adding multiple sources):

binding = MyCommands.PasteCommand.CreateBinding()

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

// by disposing the binding every event subscription will be removed

binding.Dispose();

💡 Tip: Try also online.
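What disposing a binding means can be illustrated with a small stand-alone sketch. It does not use the library and is not how KGy SOFT implements bindings: SketchBinding, FakeButton and SketchBindingDemo are hypothetical names, and the sketch assumes that the events have the usual (object, EventArgs) signature. It only shows the idea of recording every subscription so that all of them can be removed at once.

```csharp
using System;
using System.Collections.Generic;
using System.Reflection;

// Hypothetical helper, NOT the KGy SOFT implementation: it remembers every
// event subscription so that Dispose can remove all of them.
public sealed class SketchBinding : IDisposable
{
    private readonly Action callback;
    private readonly List<(object Source, EventInfo Event, Delegate Handler)> subscriptions
        = new List<(object, EventInfo, Delegate)>();

    public SketchBinding(Action callback) => this.callback = callback;

    // Subscribes to a public event by name (assumes an (object, EventArgs) signature).
    public SketchBinding AddSource(object source, string eventName)
    {
        EventInfo evt = source.GetType().GetEvent(eventName)
            ?? throw new ArgumentException($"No public event named {eventName}", nameof(eventName));
        MethodInfo method = typeof(SketchBinding).GetMethod(nameof(OnSourceEvent),
            BindingFlags.Instance | BindingFlags.NonPublic);
        Delegate handler = Delegate.CreateDelegate(evt.EventHandlerType, this, method);
        evt.AddEventHandler(source, handler);
        subscriptions.Add((source, evt, handler));
        return this; // enables the fluent style
    }

    private void OnSourceEvent(object sender, EventArgs e) => callback();

    public void Dispose()
    {
        foreach (var (source, evt, handler) in subscriptions)
            evt.RemoveEventHandler(source, handler);
        subscriptions.Clear();
    }
}

// A minimal event source standing in for a button or a menu item.
public sealed class FakeButton
{
    public event EventHandler Click;
    public void PerformClick() => Click?.Invoke(this, EventArgs.Empty);
}

public static class SketchBindingDemo
{
    // Returns how many times the callback ran; clicks after Dispose are not counted.
    public static int Run()
    {
        int executed = 0;
        var button = new FakeButton();
        var menuItem = new FakeButton();

        var binding = new SketchBinding(() => executed++)
            .AddSource(button, nameof(FakeButton.Click))
            .AddSource(menuItem, nameof(FakeButton.Click));

        button.PerformClick();   // callback runs
        menuItem.PerformClick(); // callback runs again

        binding.Dispose();       // removes both subscriptions
        button.PerformClick();   // no effect anymore
        return executed;
    }
}
```

Calling SketchBindingDemo.Run() returns 2, because the click after Dispose no longer reaches the callback.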

If you create your bindings by a CommandBindingsCollection (or add the created bindings to it), then all of the event subscriptions of every added binding can be removed at once when the collection is disposed.

public class MyView : ViewBase

{

private CommandBindingsCollection bindings = new CommandBindingsCollection();

private void InitializeView()

{

bindings.Add(MyCommands.PasteCommand)

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

// [...] more bindings

}

protected override void Dispose(bool disposing)

{

if (disposing)

bindings.Dispose(); // releases all of the event subscriptions of every added binding

base.Dispose(disposing);

}

}

An ICommand instance is stateless by itself. However, the created ICommandBinding has a State property, which is an ICommandState instance containing any arbitrary dynamic properties of the binding. Actually you can treat this object as a dynamic instance and add any properties you want. It has one predefined property, Enabled, which can be used to enable or disable the execution of the command.

// The command state can be pre-created and passed to the binding creation

var pasteCommandState = new CommandState { Enabled = false };

// passing the state object when creating the binding

var pasteBinding = bindings.Add(MyCommands.PasteCommand, pasteCommandState)

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

// ...

// enabling the command

pasteCommandState.Enabled = true;

// or:

pasteBinding.State.Enabled = true;

As the previous example shows, the Enabled state of the command can be set explicitly (push) at any time via the ICommandState object.

On the other hand, it is possible to subscribe to the ICommandBinding.Executing event, which is raised when a command is about to be executed. Through this event the binding instance checks the enabled status (poll) and allows the subscriber to change it.

// Handling the ICommandBinding.Executing event. Of course, it can be a command, too... how fancy :)

public static class MyCommands

{

// [...]

// this time we define a SourceAwareCommand because we want to get the event data

public static readonly ICommand SetPasteEnabledCommand =

new SourceAwareCommand<ExecuteCommandEventArgs>(OnSetPasteEnabledCommand);

private static void OnSetPasteEnabledCommand(ICommandSource<ExecuteCommandEventArgs> sourceData)

{

// we set the enabled state based on the clipboard

sourceData.EventArgs.State.Enabled = Clipboard.ContainsText();

}

}

// ...

// and the creation of the bindings:

// the same as previously, except that we don't pass a pre-created state this time.

var pasteBinding = bindings.Add(MyCommands.PasteCommand)

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

// A command binding to set the Enabled state of the Paste command on demand (poll). No targets this time.

bindings.Add(MyCommands.SetPasteEnabledCommand)

.AddSource(pasteBinding, nameof(pasteBinding.Executing));

A possible drawback of polling is that Enabled is set only at the moment the command is executed. If the sources can represent the disabled state (e.g. visual elements may turn gray), then the explicit way may provide better visual feedback. Just read on…

An ICommandState can store not just the predefined Enabled state but also any other data. If these states can be rendered meaningfully by the command sources (for example, when Enabled is false, then a source button or menu item can be disabled), then an ICommandStateUpdater can be used to apply the states to the sources. If the states are properties on the source, then the PropertyCommandStateUpdater can be added to the binding:

// we can pass a string-object dictionary to the constructor, or we can treat it as a dynamic object.

var pasteCommandState = new CommandState(new Dictionary<string, object>

{

{ "Enabled", false }, // can be set also this way - must have a bool value

{ "Text", "Paste" },

{ "HotKey", Key.Control | Key.V },

{ "Icon", Icons.PasteIcon },

});

// as now we add a state updater, the states will be immediately applied to the sources

bindings.Add(MyCommands.PasteCommand, pasteCommandState)

.AddStateUpdater(PropertyCommandStateUpdater.Updater) // to sync back state properties to sources

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

// This will enable all sources now (if they have an Enabled property):

pasteCommandState.Enabled = true;

// We can set anything by casting the state to dynamic or via the AsDynamic property.

// It is not a problem if a source does not have such a property. You can chain multiple updaters to

// handle special cases. If an updater fails, the next one is tried (if any).

pasteCommandState.AsDynamic.ToolTip = "Paste text from the Clipboard";

In WPF you can pass a parameter to a command, and its value is determined when the command is executed. KGy SOFT Core Libraries also support parameterized commands:

bindings.Add(MyCommands.ReplaceTextCommand)

.WithParameter(() => GetNewText()) // the delegate will be called when the command is executed

.AddSource(menuItemPaste, nameof(menuItemPaste.Click))

.AddSource(buttonPaste, nameof(buttonPaste.Click))

.AddTarget(textBox);

💡 Tip: It is recommended to specify the parameter callback before adding any sources to avoid issues if a source can be triggered before the initialization is complete.

The AddTarget method can also accept a delegate, which is invoked just before executing the command. The difference between targets and parameters is that whenever the command is triggered, the parameter value is evaluated only once, whereas the ICommand.Execute method is invoked once for each target added to the binding (but at least once if there are no targets), using the same parameter value.

If there is no more than one target, then a target and a parameter can be used interchangeably. Use whichever is more correct semantically. If the parameter/target can be determined when creating the binding (no callback is needed to determine its value), then it is probably a target rather than a parameter.
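The evaluation rule for targets and parameters can be made concrete with a small simulation. This is not library code: TriggerSketch and TriggerSketchDemo are hypothetical names, and the string "targets" merely stand in for real target objects. The sketch only mimics the behavior described here: one parameter evaluation per trigger and one execution per target.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical simulation, NOT library code: it mimics the rule described in the text.
public static class TriggerSketch
{
    // Simulates one trigger of a binding: the parameter callback runs once, then
    // "execute" is called for each target (or once with null if there are none).
    public static int Trigger<TTarget, TParam>(
        IReadOnlyList<Func<TTarget>> targets,
        Func<TParam> getParameter,
        Action<TTarget, TParam> execute) where TTarget : class
    {
        TParam parameter = getParameter(); // evaluated only once per trigger
        if (targets.Count == 0)
        {
            execute(null, parameter); // at least one execution even without targets
            return 1;
        }

        foreach (Func<TTarget> getTarget in targets)
            execute(getTarget(), parameter); // target callbacks are resolved at execution time
        return targets.Count;
    }
}

public static class TriggerSketchDemo
{
    public static (int ParameterEvaluations, List<string> Executions) Run()
    {
        int evaluations = 0;
        var executions = new List<string>();
        var targets = new List<Func<string>> { () => "textBox1", () => "textBox2" };

        TriggerSketch.Trigger<string, string>(
            targets,
            () => { evaluations++; return "new text"; },
            (target, value) => executions.Add($"{target} <- {value}"));

        return (evaluations, executions);
    }
}
```

TriggerSketchDemo.Run() reports a single parameter evaluation and two executions, both of which receive the same "new text" value.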

Most UI frameworks have some advanced property binding, supporting fancy things such as collections and paths. Though they work perfectly in most cases, they also have some drawbacks. For example, WPF data binding (similarly to other XAML-based frameworks) can be used only with DependencyProperty targets of DependencyObject instances, and Windows Forms data binding works only for IBindableComponent implementations.

For environments without any binding support, or for the aforementioned exceptional cases, KGy SOFT’s command binding offers a very simple one-way property binding through an internally predefined command exposed by the Command.CreatePropertyBinding and CommandBindingsCollection.AddPropertyBinding methods. The binding works for any source that implements the INotifyPropertyChanged interface or has a <PropertyName>Changed event for the property to bind. The target object can be anything as long as the target property can be set.

In the following example our view-model is a ModelBase (see also above), which implements INotifyPropertyChanged.

// ViewModel:

public class MyViewModel : ModelBase // ModelBase implements INotifyPropertyChanged

{

public string Text { get => Get<string>(); set => Set(value); }

}

// View: assuming we have a ViewBase<TDataContext> class with DataContext and CommandBindings properties

public class MyView : ViewBase<MyViewModel>

{

private void InitializeView()

{

CommandBindingsCollection bindings = base.CommandBindings;

MyViewModel viewModel = base.DataContext;

// [...] the usual bindings.Add(...) lines here

// Adding a simple property binding (uses a predefined command internally):

bindings.AddPropertyBinding(

viewModel, "Text", // source object and property name

"Text", textBox, labelTextBox); // target property name and target object(s)

// a formatting can be added if types (or just the values) of the properties should be different:

bindings.AddPropertyBinding(

viewModel, "Text", // source object and property name

"BackColor", // target property name

value => value == null ? Colors.Yellow : SystemColors.WindowColor, // string -> Color

textBox); // target object(s)

}

}
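To make the two supported kinds of sources more tangible, here is a minimal stand-alone sketch of a one-way property binding. It is not the library's implementation: OneWayBindingSketch, LegacySource, Label and the demo class are hypothetical names, and the fallback branch assumes a plain EventHandler event named <PropertyName>Changed. It shows how a binding could listen either to INotifyPropertyChanged or to such an event and apply an optional formatting delegate.

```csharp
using System;
using System.ComponentModel;
using System.Reflection;

// Hypothetical sketch, NOT the KGy SOFT implementation: a one-way property binding
// that listens to INotifyPropertyChanged or to a <PropertyName>Changed event.
public sealed class OneWayBindingSketch : IDisposable
{
    private readonly object source;
    private readonly PropertyInfo sourceProperty;
    private readonly object target;
    private readonly PropertyInfo targetProperty;
    private readonly Func<object, object> format;
    private readonly Action unsubscribe;

    public OneWayBindingSketch(object source, string sourcePropertyName,
        string targetPropertyName, object target, Func<object, object> format = null)
    {
        this.source = source;
        this.target = target;
        this.format = format ?? (value => value);
        sourceProperty = source.GetType().GetProperty(sourcePropertyName)
            ?? throw new ArgumentException("Unknown source property", nameof(sourcePropertyName));
        targetProperty = target.GetType().GetProperty(targetPropertyName)
            ?? throw new ArgumentException("Unknown target property", nameof(targetPropertyName));

        if (source is INotifyPropertyChanged notifier)
        {
            PropertyChangedEventHandler handler = (s, e) =>
            {
                if (e.PropertyName == sourcePropertyName)
                    Update();
            };
            notifier.PropertyChanged += handler;
            unsubscribe = () => notifier.PropertyChanged -= handler;
        }
        else
        {
            // Fallback: a plain EventHandler event named <PropertyName>Changed
            EventInfo changed = source.GetType().GetEvent(sourcePropertyName + "Changed")
                ?? throw new ArgumentException("The source cannot notify about changes", nameof(source));
            EventHandler handler = (s, e) => Update();
            changed.AddEventHandler(source, handler);
            unsubscribe = () => changed.RemoveEventHandler(source, handler);
        }
    }

    // Copies the (optionally formatted) source value to the target property.
    private void Update() => targetProperty.SetValue(target, format(sourceProperty.GetValue(source)));

    public void Dispose() => unsubscribe();
}

// A source without INotifyPropertyChanged, exposing only a TextChanged event.
public sealed class LegacySource
{
    private string text;
    public event EventHandler TextChanged;
    public string Text
    {
        get => text;
        set { text = value; TextChanged?.Invoke(this, EventArgs.Empty); }
    }
}

public sealed class Label { public string Caption { get; set; } }

public static class OneWayBindingSketchDemo
{
    public static string Run()
    {
        var source = new LegacySource();
        var label = new Label();
        using (new OneWayBindingSketch(source, nameof(LegacySource.Text), nameof(Label.Caption), label,
            value => $"Text: {value}"))
        {
            source.Text = "Hello"; // propagated to label.Caption with formatting
        }

        source.Text = "ignored"; // the binding is disposed, so the label is not updated
        return label.Caption;
    }
}
```

OneWayBindingSketchDemo.Run() returns "Text: Hello": the first change is propagated through the TextChanged event, while the change made after disposing the binding is not.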

Performance Measurement

You can use the Profiler class to inject measurement sections as using blocks into your code base:

💡 Tip: Try also online.

const string category = "Example";

using (Profiler.Measure(category, "DoBigTask"))

{

// ... code ...

// measurement blocks can be nested

using (Profiler.Measure(category, "DoSmallTask"))

{

// ... more code ...

}

}

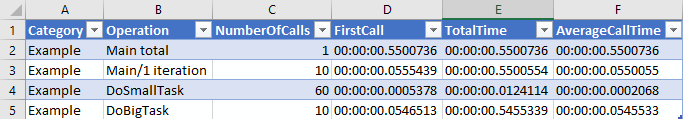

The number of hits and the execution times (first, total, average) are tracked and can be obtained explicitly, or you can let them be dumped automatically into an .xml file.

<?xml version="1.0" encoding="utf-8"?>
<ProfilerResult>
  <item Category="Example" Operation="Main total" NumberOfCalls="1" FirstCall="00:00:00.5500736" TotalTime="00:00:00.5500736" AverageCallTime="00:00:00.5500736" />
  <item Category="Example" Operation="Main/1 iteration" NumberOfCalls="10" FirstCall="00:00:00.0555439" TotalTime="00:00:00.5500554" AverageCallTime="00:00:00.0550055" />
  <item Category="Example" Operation="DoSmallTask" NumberOfCalls="60" FirstCall="00:00:00.0005378" TotalTime="00:00:00.0124114" AverageCallTime="00:00:00.0002068" />
  <item Category="Example" Operation="DoBigTask" NumberOfCalls="10" FirstCall="00:00:00.0546513" TotalTime="00:00:00.5455339" AverageCallTime="00:00:00.0545533" />
</ProfilerResult>
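The bookkeeping behind such statistics (number of calls, first, total and average call time per operation) can be sketched in a few lines. The following is not the Profiler class of the library: MiniProfiler, its Entry type and the demo class are hypothetical names. It only illustrates how a using block can feed per-operation counters, assuming a Stopwatch-based measurement.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;

// Hypothetical sketch, NOT the Profiler class of the library: it illustrates how a
// using block can collect the number of calls and the first/total/average times.
public static class MiniProfiler
{
    public sealed class Entry
    {
        public long NumberOfCalls { get; internal set; }
        public TimeSpan FirstCall { get; internal set; }
        public TimeSpan TotalTime { get; internal set; }
        public TimeSpan AverageCallTime => NumberOfCalls == 0
            ? TimeSpan.Zero
            : TimeSpan.FromTicks(TotalTime.Ticks / NumberOfCalls);
    }

    // The measurement starts when the scope is created and ends when it is disposed.
    private sealed class Scope : IDisposable
    {
        private readonly Entry entry;
        private readonly Stopwatch stopwatch = Stopwatch.StartNew();

        internal Scope(Entry entry) => this.entry = entry;

        public void Dispose()
        {
            stopwatch.Stop();
            lock (entry)
            {
                if (entry.NumberOfCalls == 0)
                    entry.FirstCall = stopwatch.Elapsed;
                entry.NumberOfCalls++;
                entry.TotalTime += stopwatch.Elapsed;
            }
        }
    }

    private static readonly Dictionary<(string, string), Entry> entries
        = new Dictionary<(string, string), Entry>();

    public static IDisposable Measure(string category, string operation)
    {
        lock (entries)
        {
            if (!entries.TryGetValue((category, operation), out Entry entry))
                entries[(category, operation)] = entry = new Entry();
            return new Scope(entry);
        }
    }

    // Returns the collected entry or null if the operation has never been measured.
    public static Entry GetResult(string category, string operation)
    {
        lock (entries)
            return entries.TryGetValue((category, operation), out Entry entry) ? entry : null;
    }
}

public static class MiniProfilerDemo
{
    public static MiniProfiler.Entry Run()
    {
        for (int i = 0; i < 3; i++)
        {
            using (MiniProfiler.Measure("Example", "DoBigTask"))
            {
                // nested blocks are measured independently
                using (MiniProfiler.Measure("Example", "DoSmallTask"))
                {
                    // ... measured code ...
                }
            }
        }

        return MiniProfiler.GetResult("Example", "DoBigTask");
    }
}
```

After the first call of MiniProfilerDemo.Run(), the returned entry has a NumberOfCalls of 3. The measured times depend on the machine, so they are not shown here.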

The result .xml can be imported easily into Microsoft Excel:

For more direct operations you can use the PerformanceTest and PerformanceTest<TResult> classes to measure operations with void and non-void return values, respectively.

new PerformanceTest

{

TestName = "System.Enum vs. KGySoft.CoreLibraries.Enum<TEnum>",

Iterations = 1_000_000,

Repeat = 2

}

.AddCase(() => ConsoleColor.Black.ToString(), "Enum.ToString")

.AddCase(() => Enum<ConsoleColor>.ToString(ConsoleColor.Black), "Enum<TEnum>.ToString")

.DoTest()

.DumpResults(Console.Out);

💡 Tip: Try also online.

The result of the DoTest method can be processed manually or dumped to any TextWriter. The example above dumps it to the console and produces a result similar to this one:

==[System.Enum vs. KGySoft.CoreLibraries.Enum<TEnum> Results]================================================
Iterations: 1 000 000
Warming up: Yes
Test cases: 2
Repeats: 2
Calling GC.Collect: Yes
Forced CPU Affinity: 2
Cases are sorted by time (quickest first)
--------------------------------------------------
1. Enum<TEnum>.ToString: average time: 26,60 ms
  #1  29,40 ms   <---- Worst
  #2  23,80 ms   <---- Best
  Worst-Best difference: 5,60 ms (23,55%)
2. Enum.ToString: average time: 460,78 ms (+434,18 ms / 1 732,36%)
  #1  456,18 ms  <---- Best
  #2  465,37 ms  <---- Worst
  Worst-Best difference: 9,19 ms (2,01%)

If you need parameterized tests, you can simply derive from the PerformanceTestBase<TDelegate, TResult> class. Override the OnBeforeCase method to reset the parameter for each test case. For example, this is how you can use a prepared Random instance in a performance test:

public class RandomizedPerformanceTest<T> : PerformanceTestBase<Func<Random, T>, T>

{

private Random random;

protected override T Invoke(Func<Random, T> del) => del.Invoke(random);

protected override void OnBeforeCase() => random = new Random(0); // resetting with a fixed seed

}

💡 Tip: Try also online.

And then a properly prepared Random instance will be an argument of your test cases:

new RandomizedPerformanceTest<string> { Iterations = 1_000_000 }

.AddCase(rnd => rnd.NextEnum<ConsoleColor>().ToString(), "Enum.ToString")

.AddCase(rnd => Enum<ConsoleColor>.ToString(rnd.NextEnum<ConsoleColor>()), "Enum<TEnum>.ToString")

.DoTest()

.DumpResults(Console.Out);

License

KGy SOFT Core Libraries are under the KGy SOFT License 1.0, which is a permissive GPL-like license. It allows you to copy and redistribute the material in any medium or format for any purpose, even commercially. The only thing that is not allowed is to distribute modified material as your own: though you are free to change and re-use anything, do that by giving appropriate credit. See the LICENSE file for details.